Problem Statement

Lack of a platform that allows persons with varying levels of sensorial abilities to communicate via their preferred choices of language and modality

The choice of language and modality (haptic, auditory, visual, or textual) for communication varies from individual to individual and depends primarily on their sensorial abilities. When two people do not share the same sensorial abilities, a major obstacle to communication is the difficulty of learning the other person's preferred language and modality.

Solution

To create a platform that utilises a user's available senses to communicate with another user through that user's prominent sense

To create a platform that utilises a user's prominent or available senses to convey information effectively (via sensory substitution), and to reintroduce already familiar communication techniques as smart tools that can be used to interact with the outside world.

The platform will consist of:

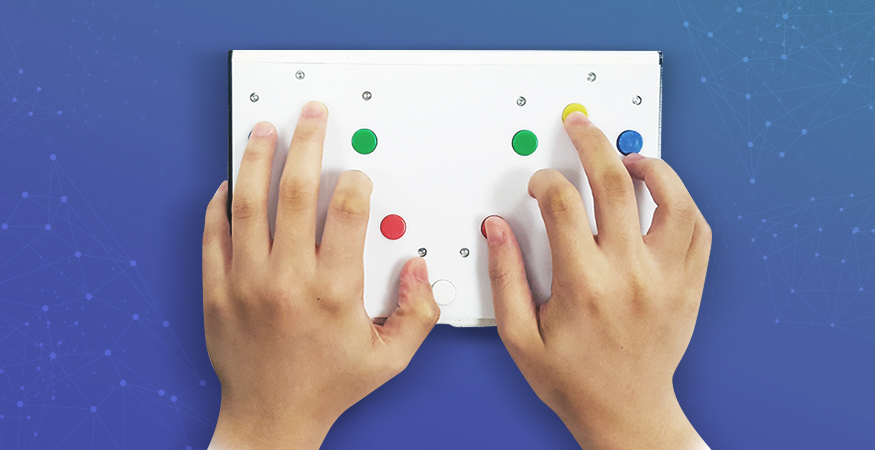

1. A computer-mediated device that uses haptic feedback to facilitate two-way communication, extending and automating the use of Finger Braille.

2. A mobile software application that converts text, image, speech, and haptic data into a form that can be presented in various languages and sensory modalities to cater to a wider range of users.

Outcomes

Persons with sensory disabilities are able to communicate effectively and accurately with the people around them.

Grantee

Keio-NUS CUTE Center

Beneficiaries

Persons with Disabilities & Caregivers

More About Grantee

Started in 2009, the Keio-NUS CUTE (Connective Ubiquitous Technology for Embodiments) Center is a collaboration between the National University of Singapore (NUS) and Keio University, Japan, and is partially funded by a grant from the National Research Foundation. The centre focuses on fundamental research in interactive digital media, addressing the future of interactive, social and communication media.